Connecting Python with LLMs

PyLadies Monterrey

May 9, 2026 | 12:30pm CT

Introduction

Overview

- What are large language models (LLMs)?

- Connecting Python with LLMs using chatlas

- Key features: providers, tools, streaming, and multi-modal input

- Practical examples and use cases

Quick introduction to LLMs

What are Large Language Models?

- LLM = Large Language Model

- AI models trained on massive amounts of text (books, websites, code)

- Can understand and generate human-like text

- Power tools you already use: ChatGPT, Claude, Gemini

- Today: Learn how to connect your Python code to LLMs

How do they work?

Message roles

| Role | Description |
|---|---|
| system_prompt | Instructions from the developer (that’s you!) to set the behavior of the assistant |
| user | Messages from the person interacting with the assistant |
| assistant | The AI model’s responses to the user |
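As a rough illustration of the three roles, many chat APIs represent a conversation as an ordered list of role-tagged messages. The sketch below assumes a plain list of dicts with `role` and `content` keys; those key names, the `"system"` label, and the `messages_by_role` helper are assumptions for illustration, not the format of any particular library (chatlas, for example, takes the system prompt through a `system_prompt` argument).

```python
# A conversation as an ordered list of role-tagged messages (illustrative shape only).
conversation = [
    {"role": "system", "content": "Reply in Spanish using at most three sentences."},
    {"role": "user", "content": "Tell me something about Monterrey, Mexico."},
    {"role": "assistant", "content": "Monterrey es la capital del estado de Nuevo León."},
]


def messages_by_role(messages, role):
    """Return the content of every message sent with the given role."""
    return [m["content"] for m in messages if m["role"] == role]


user_turns = messages_by_role(conversation, "user")
print(user_turns)
```

The order of the list matters: the model reads the messages from top to bottom, so the system message shapes how every later turn is answered.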

Interacting with LLMs

Turn-based conversation

Programmatic chat clients

- Provider SDKs (OpenAI, Anthropic, Google)

- LangChain

- LlamaIndex

- Haystack

- chatlas

Introducing chatlas

Why chatlas?

- Simple - Clean API, minimal boilerplate, quick to learn

- Multi-provider - Switch between OpenAI, Anthropic, Google, Ollama

- Tool calling - Let LLMs execute your Python functions

- Rich content - Text, images, PDFs, structured JSON

- Stateful - Automatic conversation history tracking

Using chatlas

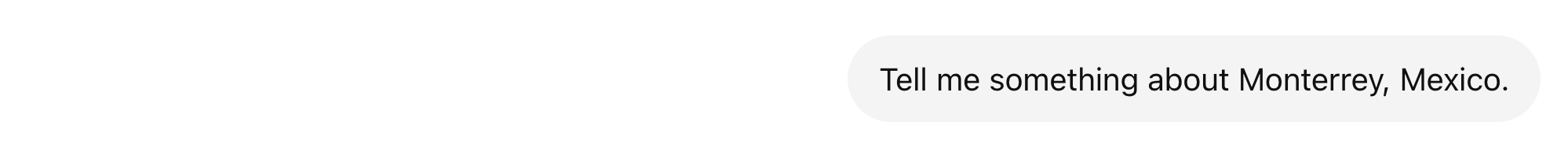

Okay, let's dive into Monterrey, Mexico! It's a really fascinating and rapidly growing city with

a unique blend of industrial power, stunning natural beauty, and a thriving cultural scene.

Here’s a breakdown of what makes it interesting:

1. The Industrial Heart of Mexico:

• Manufacturing Hub: Monterrey is the undisputed center of Mexico's automotive industry. It's

home to the largest automotive factory complex in the world – the Grupo Ángeles (Angels Group)

plant – which produces a huge percentage of vehicles sold in North America. This has made it

incredibly important for Mexico’s economy.

• Beyond Autos: However, Monterrey is diversifying. It’s a major center for manufacturing in

glass, steel, and electronics, as well as food processing and pharmaceuticals.

• Strategic Location: Its location on the Gulf of Mexico makes it a crucial trade and

transportation hub for the region.

2. Stunning Geography & Landscape:

• Mountains & Coastline: Monterrey sits in a valley surrounded by the majestic Sierra Madre

Occidental mountains. The city offers incredible views of the coastline - the Bay of the

Hudson.

• Lake Rocío: A vast lake sits just south of the city, providing a scenic landscape and

recreational opportunities.

• Natural Parks: Nearby are national parks like El Chorro and El Macầu, offering hiking,

birdwatching, and stunning natural areas.

3. Culture & Why It’s Becoming Popular:

• Modern & Trendy: Monterrey has undergone a massive revitalization in recent decades,

attracting a creative vibe and a young, stylish population. It’s a place where you’ll find

art galleries, trendy boutiques, and a lively nightlife.

• Music & Arts: Monterrey has a booming music scene, with numerous venues hosting artists from

various genres – rock, electronic, hip-hop, etc. The city is known for its creativity and

musical energy.

• Food Scene: The city's culinary scene has exploded in recent years. You’ll find a fantastic

mix of Mexican cuisine, international flavors, and innovative restaurants.

• Historical Significance: While known for its industrial pulse, the city has a rich history

dating back to the 18th century, and there are some interesting museums and historic sites.

4. Some Key Facts & Statistics:

• Population: Around 2.5 million (making it one of the largest cities in Mexico)

• GDP: High, significantly contributing to Mexico’s overall economic growth.

• Currency: Mexican Peso (MXN)

5. Things to See & Do:

• The Plaza Grande: The main square, bustling with activity.

• The Museo de la Historia de Monterrey: A must-visit for history enthusiasts.

• The "The Gate" Bridge: An iconic and impressive modern bridge.

• The Rosedal Park: A massive park with a Japanese-style garden.

• Museo de Arte Contemporáneo de Monterrey (MAC): A significant art museum.

Resources for Further Research:

• Wikipedia - Monterrey: https://en.wikipedia.org/wiki/Monterey

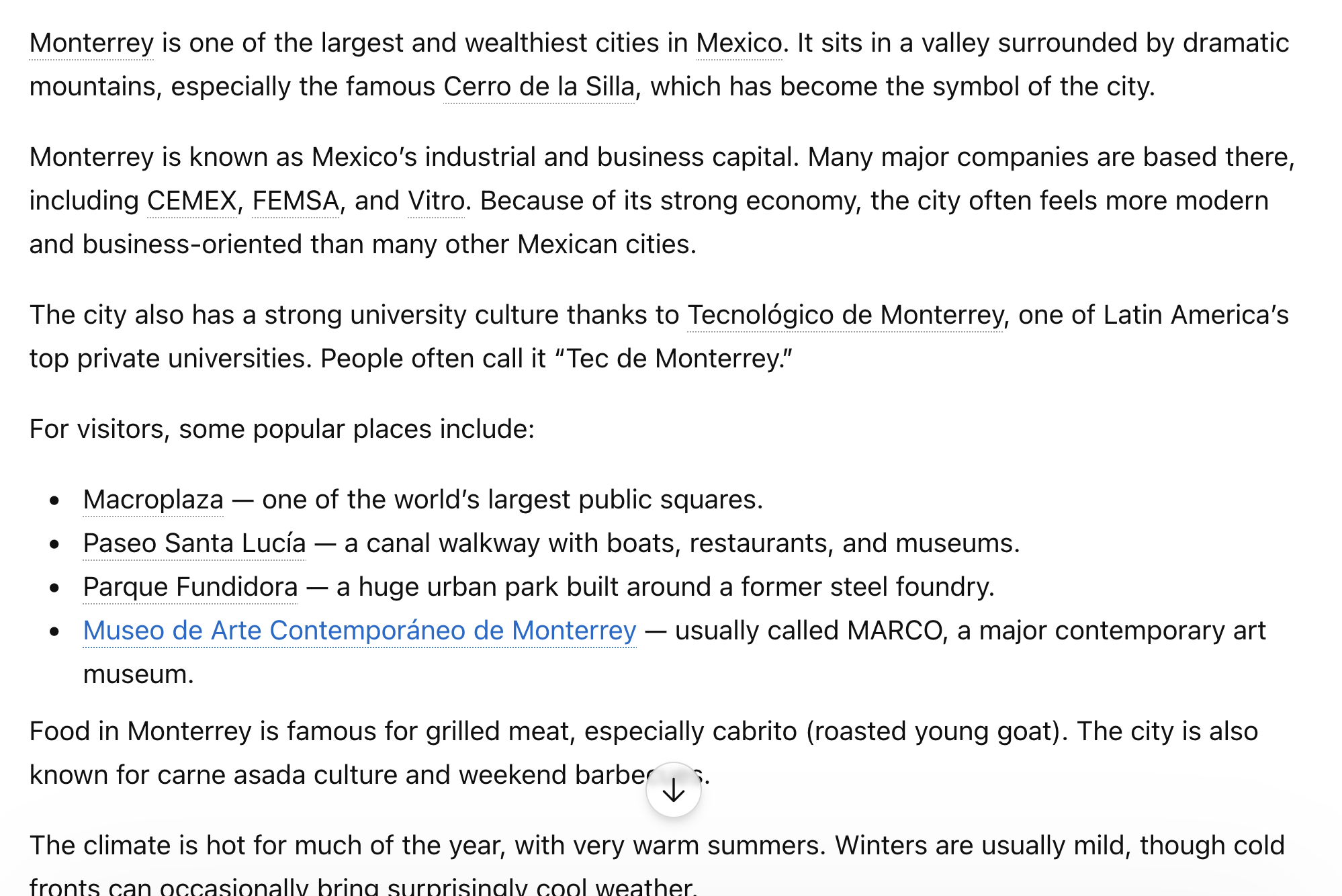

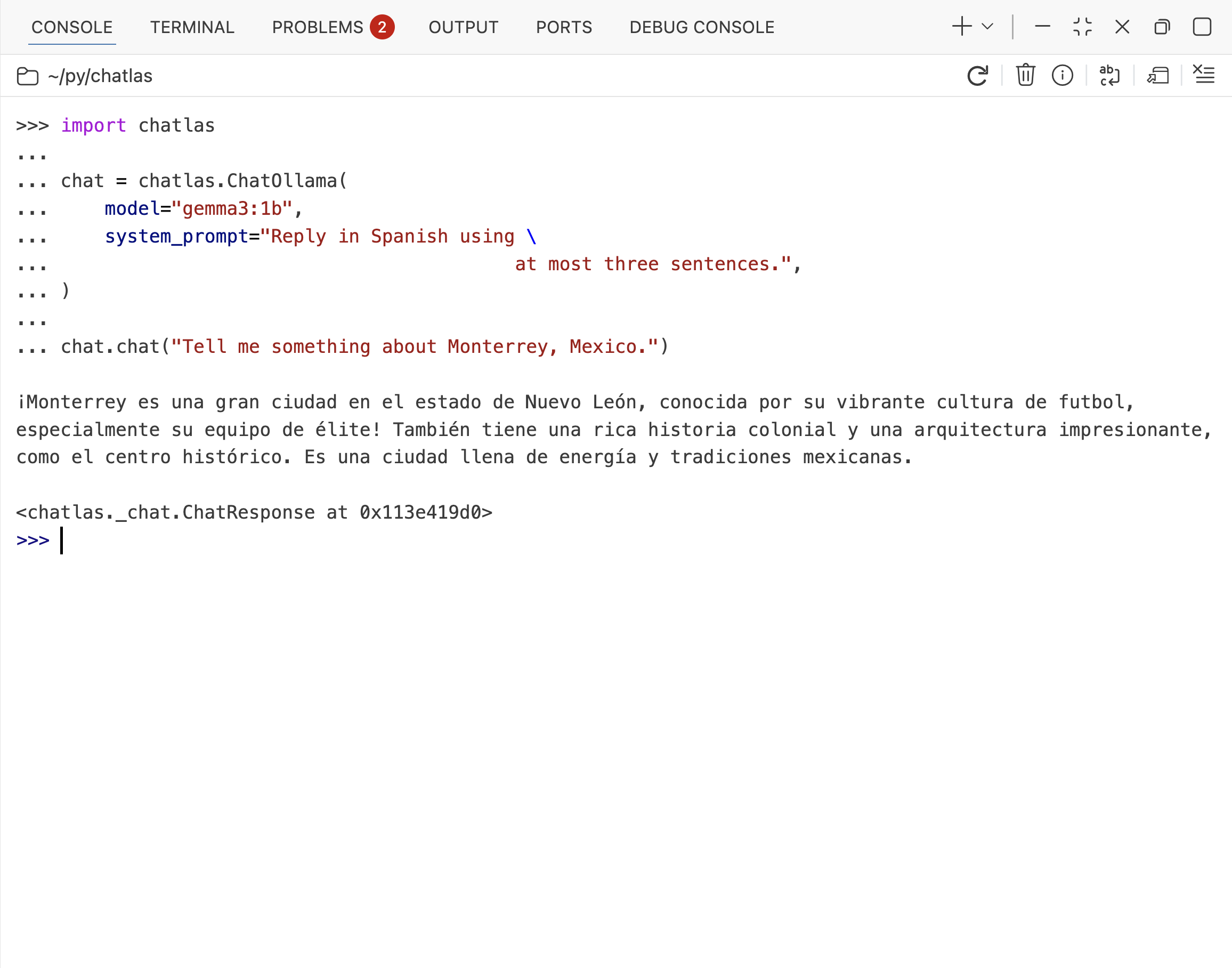

• Mexico Tourism: https://www.visitmexico.com/city/monterreyAdding a system prompt

¡Monterrey es una ciudad vibrante en el centro de México, conocida por su fútbol, especialmente el equipo de élite. Tiene una rica historia colonial y una arquitectura impresionante, incluyendo el Palacio de Bellas Artes. También es un importante centro industrial y cultural, con una escena gastronómica notable.

Using chatlas in the console

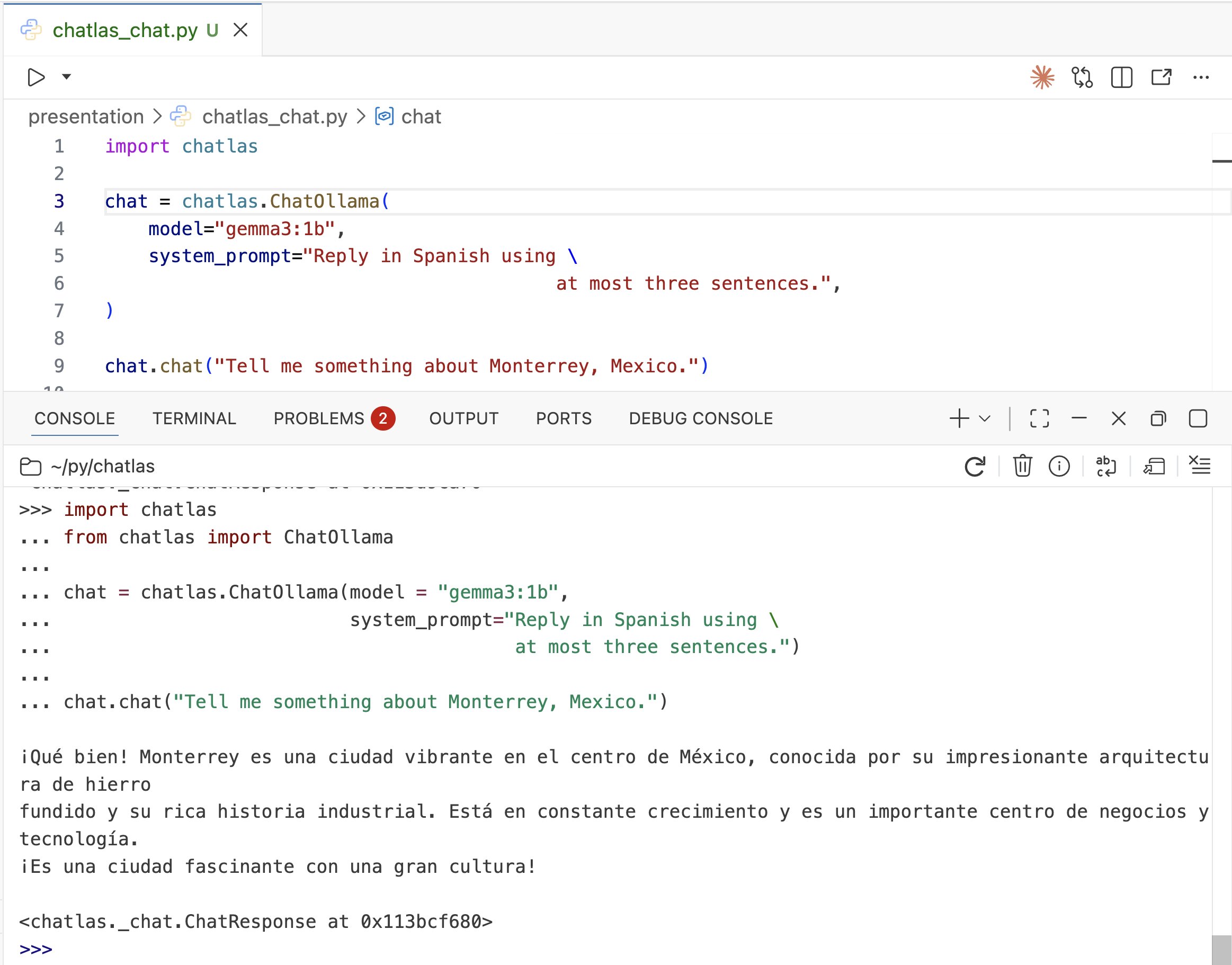

Using chatlas in a script

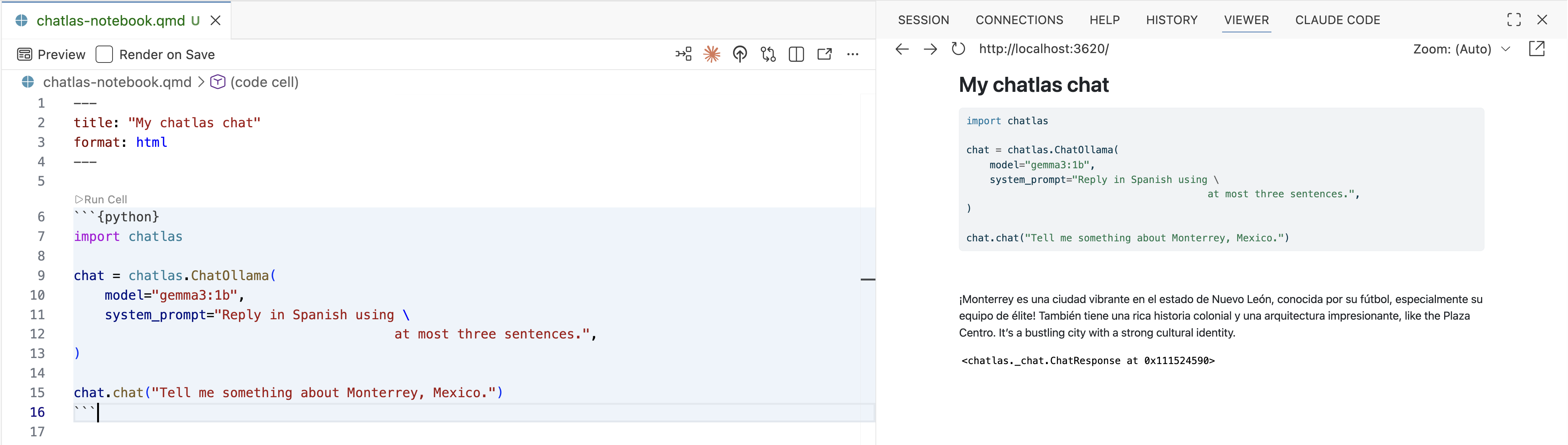

Using chatlas in a notebook

Providers and models

- Provider: a company that hosts and serves models

- Model: a specific LLM with particular capabilities

| Provider | Example Models |
|---|---|
| OpenAI | gpt-4o, gpt-4-turbo, gpt-3.5-turbo |
| Anthropic | claude-3-5-sonnet-20241022, claude-3-opus-20240229 |
| Google | gemini-1.5-pro, gemini-1.5-flash |
| Azure OpenAI | gpt-4, gpt-35-turbo |
| Ollama | llama3.2, gemma2, mistral |
| Groq | llama-3.3-70b-versatile, mixtral-8x7b-32768 |
| Databricks | databricks-meta-llama-3-1-70b-instruct |
| Together | meta-llama/Meta-Llama-3.1-70B-Instruct-Turbo |
| Perplexity | llama-3.1-sonar-large-128k-online |
| GitHub | gpt-4o, claude-3.5-sonnet |
| Bedrock | anthropic.claude-3-5-sonnet-20241022-v2:0 |

How are models different?

- Context: How many tokens can you give the model?

- Speed: How many tokens per second?

- Cost: How much does it cost to use the model?

- Intelligence: How smart is the model?

- Capabilities: Vision, reasoning, tools, etc.
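Context size and cost are both counted in tokens, so a back-of-the-envelope cost estimate is simple arithmetic. The sketch below uses a hypothetical `estimate_cost` helper and hypothetical per-million-token prices; real prices differ by provider and model and change over time.

```python
def estimate_cost(input_tokens, output_tokens, input_price_per_m, output_price_per_m):
    """Estimate the dollar cost of one request from token counts and per-million-token prices."""
    total = input_tokens * input_price_per_m + output_tokens * output_price_per_m
    return total / 1_000_000


# Hypothetical prices: $3 per million input tokens, $15 per million output tokens.
cost = estimate_cost(
    input_tokens=2_000,
    output_tokens=500,
    input_price_per_m=3.0,
    output_price_per_m=15.0,
)
print(f"${cost:.4f}")
```

Output tokens are often priced higher than input tokens, which is why the two counts are kept separate here.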

chatlas

Providers

ChatOpenAI()

ChatAnthropic()

ChatGoogle()

Local models

ChatOllama()

Enterprise

ChatBedrockAnthropic()

Multiple providers

Switch between LLM providers seamlessly:

Switching providers

```python
import os

from chatlas import ChatAnthropic  # requires the anthropic package

chat = ChatAnthropic(
    model="claude-sonnet-4-6",
    api_key=os.getenv("ANTHROPIC_API_KEY"),
    system_prompt="Reply in Spanish using at most three sentences.",
)

chat.chat("Tell me something about Monterrey, Mexico.")
```

Monterrey es la capital del estado de Nuevo León y es considerada una de las ciudades más industriales y económicamente importantes de México. Es conocida por su impresionante paisaje montañoso, especialmente el icónico Cerro de la Silla, que simboliza la ciudad. Además, Monterrey es reconocida por su vibrante cultura, su gastronomía y sus importantes universidades, como el Tecnológico de Monterrey.

Tool calling

Accessing external functions

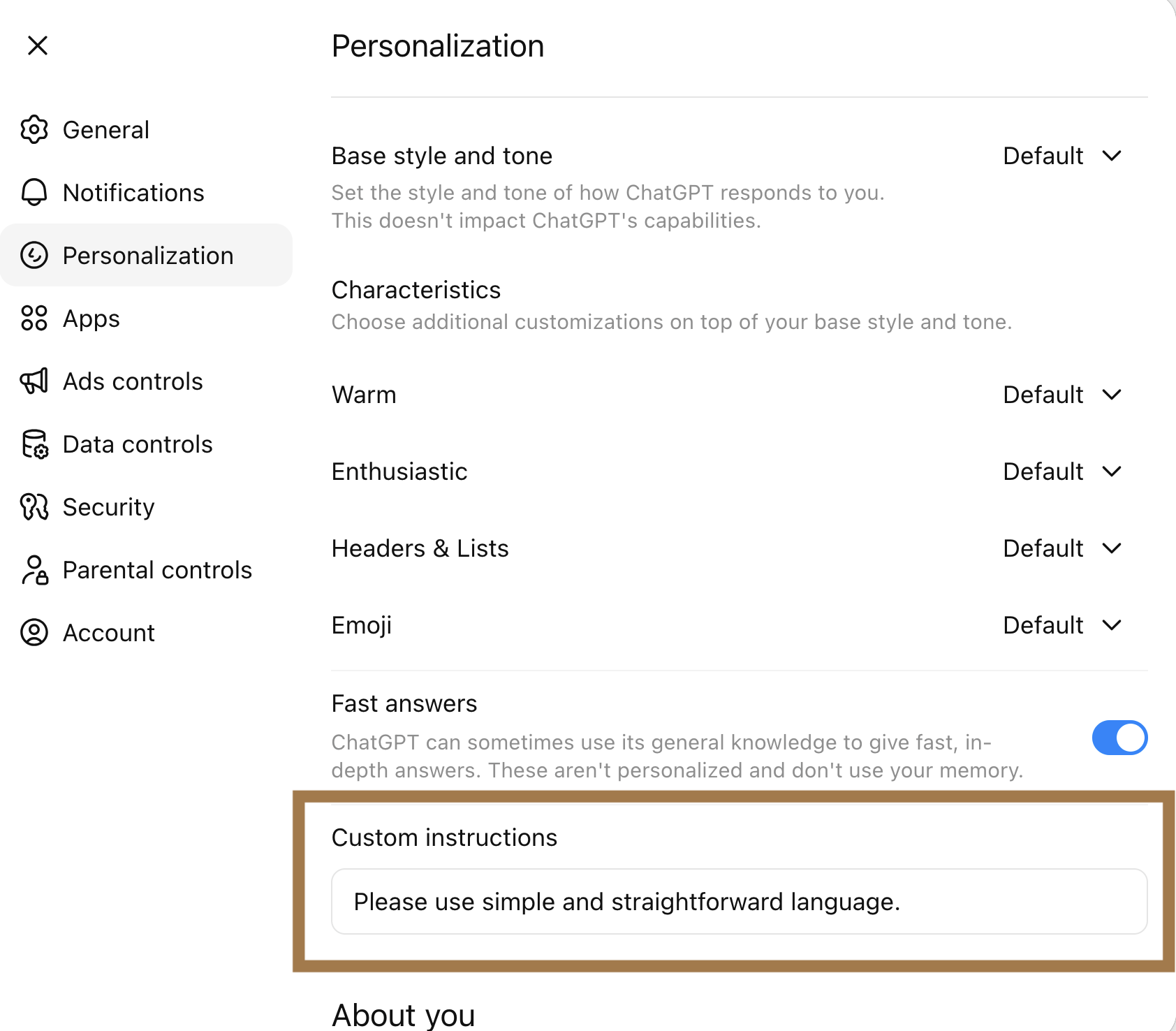

I don't have access to real-time weather data, so I can't tell you the current conditions in Monterrey,

Mexico.

However, I can share that Monterrey typically has:

• Hot summers (May-September) with temperatures often exceeding 95°F (35°C)

• Mild winters (December-February) with temperatures around 50-70°F (10-21°C)

• Low rainfall overall, though most rain falls June-September

• Semi-arid climate with plenty of sunshine year-round

For current conditions, I'd recommend checking:

• Weather.com

• Weather apps on your phone

• Local Monterrey news websites

• Google weather search

Is there something specific about Monterrey's weather you'd like to know about?

Create a tool function:

```python
import requests


def get_current_weather(lat: float, lng: float):
    """
    Get the current weather given a latitude and longitude.

    Parameters
    ----------
    lat: The latitude of the location.
    lng: The longitude of the location.
    """
    lat_lng = f"latitude={lat}&longitude={lng}"
    url = f"https://api.open-meteo.com/v1/forecast?{lat_lng}&current=temperature_2m,wind_speed_10m&hourly=temperature_2m,relative_humidity_2m,wind_speed_10m"
    response = requests.get(url, timeout=10)
    response.raise_for_status()  # fail loudly on HTTP errors
    return response.json()["current"]
```

Register and call the tool:
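Conceptually, registering a tool makes a function discoverable by name so that a model's tool request can be routed to it. The sketch below shows that idea without any library. The `TOOLS` registry, the `register_tool` decorator, the `handle_tool_request` helper, the JSON request format, and the stubbed weather function are all hypothetical stand-ins, not chatlas internals.

```python
import json

# Hypothetical registry mapping tool names to Python callables.
TOOLS = {}


def register_tool(func):
    """Make a function available to be called by name."""
    TOOLS[func.__name__] = func
    return func


@register_tool
def get_current_weather(lat: float, lng: float):
    """Stub that returns canned data instead of calling a weather API."""
    return {"temperature_2m": 23.1, "wind_speed_10m": 4.5}


def handle_tool_request(request_json: str):
    """Look up the requested tool and call it with the model-supplied arguments."""
    request = json.loads(request_json)
    func = TOOLS[request["name"]]
    return func(**request["arguments"])


# A tool request shaped the way a model might emit it (this format is an assumption).
request_json = '{"name": "get_current_weather", "arguments": {"lat": 25.6866, "lng": -100.3161}}'
result = handle_tool_request(request_json)
print(result)
```

With chatlas, the registration step is a call to `chat.register_tool(get_current_weather)` before asking the question with `chat.chat(...)`; the library takes care of passing the request and the result between your function and the model.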

# 🔧 tool request (toolu_01MgUEzjRnY9Sx1TJREebgTJ)

get_current_weather(lat=25.6866, lng=-100.3161)

# ✅ tool result (toolu_01MgUEzjRnY9Sx1TJREebgTJ)

{ 'time': '2026-05-09T13:00',

'interval': 900,

'temperature_2m': 23.1,

'wind_speed_10m': 4.5}

The current weather in Monterrey, Mexico is:

• Temperature: 23.1°C (about 74°F)

• Wind Speed: 4.5 km/h (light breeze)

• Time: 1:00 PM local time

It's a pleasant day in Monterrey with comfortable temperatures and light winds!

Multi-modal input

Image input

Here's a concise explanation of the image:

This photo shows the iconic Popocatépetl volcano in Mexico, rising dramatically in the background. It's framed by a sprawling cityscape – likely Mexico City – and a dramatic sky filled with puffy, layered clouds.

The volcano's distinctive sharp peaks are clearly visible.

PDF input

The image displays a natural landscape with a large, rounded mountain situated near what appears to be an

urban area. In the foreground are various trees and clouds, giving the impression of a hazy or overcast day.

Behind the trees, we can see a cityscape of buildings at varying distances, culminating in the tallest

structures that resemble those in a major metropolitan area with high-rise constructions. In the distant

background, centered among the clouds, is a mountain peak that stands out due to its relative height and

isolation. The top right corner features an image of a volcano-like mountain on a glossy surface, suggesting

the photo may have been taken from a screen or print. This is a cityscape as viewed looking toward the coast

or another body of water, with the natural scenery providing a serene counterpoint to the bustling urban

environment below.

Streaming

Responding one chunk at a time
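The idea of consuming a response chunk by chunk can be sketched with a plain Python generator. Here `fake_stream` is a hypothetical stand-in for a model stream, and splitting on spaces is a simplification: real chunks are model tokens, which do not line up with words.

```python
import time


def fake_stream(text, delay=0.0):
    """Yield a response one space-delimited chunk at a time (stand-in for a model stream)."""
    for word in text.split(" "):
        time.sleep(delay)  # simulate waiting for the next chunk
        yield word + " "


chunks = []
for chunk in fake_stream("That's a cool name, Isabella!"):
    print(chunk, end="", flush=True)  # show each chunk as soon as it arrives
    chunks.append(chunk)
print()

full_response = "".join(chunks).strip()
```

chatlas exposes a `stream()` method on its chat objects that is consumed in a similar loop, so the user sees text appear as it is generated instead of waiting for the whole reply.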

That

'

s

a

cool

name

,

Isabella

!

It

has

a

nice

sound

to

it

.

😊

Is

there

anything

you

'

d

like

to

talk

about

,

or

were

you

just

letting

me

know

your

name

?

Chat history

Remembering past interactions
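A stateful client remembers earlier turns by keeping every message and sending the whole list with each new request. The toy class below illustrates only that bookkeeping; `ToyChat` is hypothetical, it never calls a model, and its canned reply is just a way to show that state accumulates.

```python
class ToyChat:
    """Toy stand-in for a stateful chat client: it records every turn it sees."""

    def __init__(self, system_prompt=None):
        self.turns = []
        if system_prompt:
            self.turns.append(("system", system_prompt))

    def chat(self, user_message):
        self.turns.append(("user", user_message))
        # A real client would send all of self.turns to the model here.
        reply = f"I have seen {self._count('user')} user message(s)."
        self.turns.append(("assistant", reply))
        return reply

    def _count(self, role):
        return sum(1 for r, _ in self.turns if r == role)


chat = ToyChat(system_prompt="Be brief.")
chat.chat("My name is Isabella.")
second_reply = chat.chat("What is my name?")
print(second_reply)
```

Because the earlier turn is still in the history when the second question arrives, a real model has the information it needs to answer with the name.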

Your name is Isabella! 😊 I asked you what your name was earlier.

It's a bit of a trick question! 😄

Would you like to tell me something about yourself, Isabella?

Practical examples and use cases

Example: Batch document summarization

Process dozens of research papers and create a summary CSV:

from pathlib import Path

from pydantic import BaseModel

import pandas as pd

chat = chatlas.ChatGoogle(model="gemini-1.5-flash")

class Paper(BaseModel):

"""Extract key information from this research paper."""

title: str

authors: list[str]

year: int

key_findings: str # 1-2 sentences

# Process all PDFs in a folder

papers = []

for pdf_path in Path("research_papers/").glob("*.pdf"):

result = chat.chat_structured(

chatlas.content_pdf_file(pdf_path), data_model=Paper

)

papers.append(result.model_dump())

# Save to CSV for analysis

df = pd.DataFrame(papers)

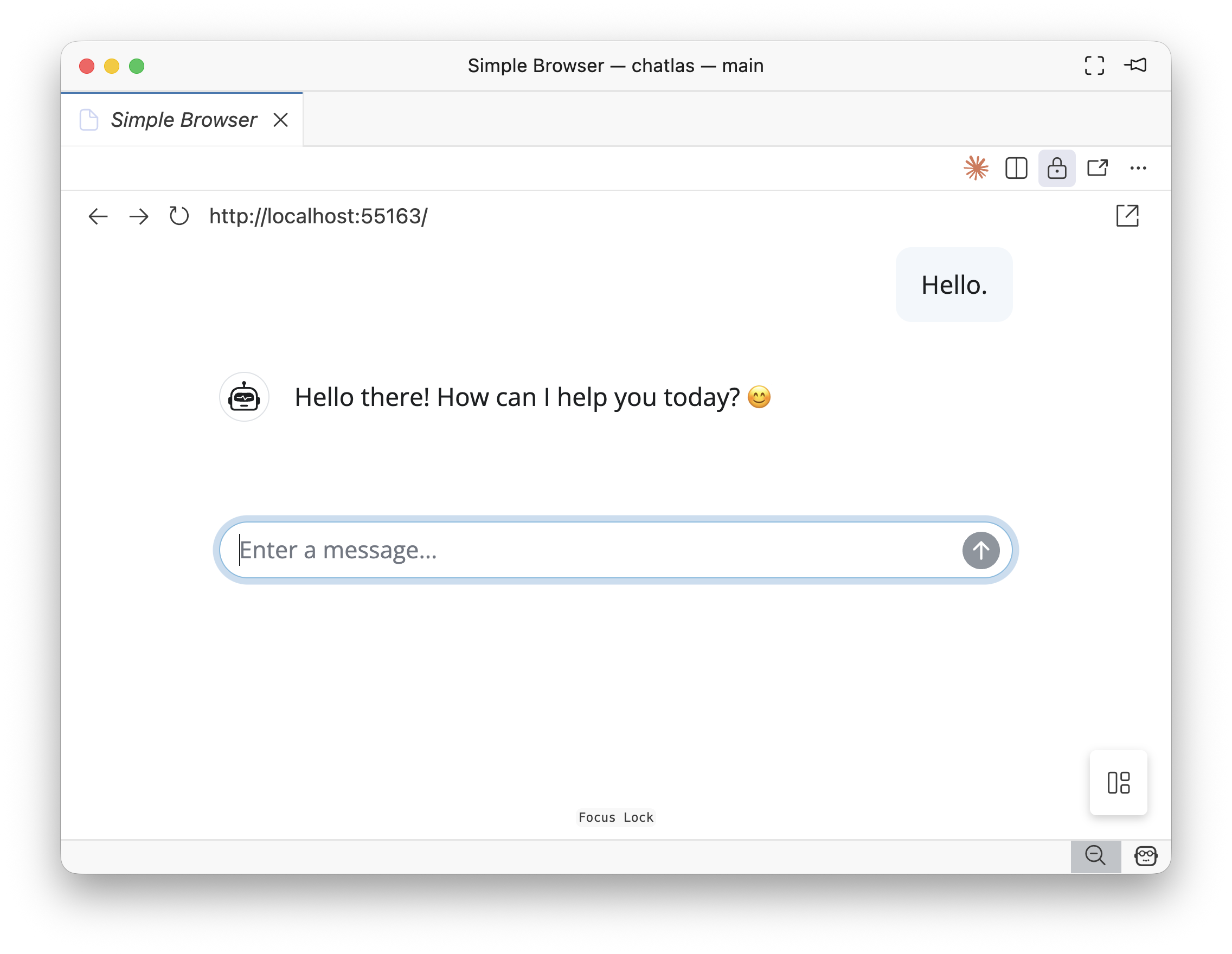

df.to_csv("paper_summaries.csv", index=False)Creating a chatbot with chatlas with Shiny

Creating a chatbot with chatlas with Shiny

Why build with chatlas?

Generic chatbot (ChatGPT, Claude):

❌ Can’t access your local data

❌ Can’t query your databases

❌ Can’t integrate with your APIs

❌ Can’t automate workflows

Custom chatbot with chatlas:

✅ Works with YOUR data

✅ Integrates with YOUR tools

✅ Automates YOUR workflows

Example: PyLadies Monterrey event assistant

What if you could ask questions about local events?

- “What events do we have about AI?”

- “Tell me about the TEC de Monterrey venue”

- “What’s our average event attendance?”

This requires integration with local data!
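A question like the average-attendance one maps naturally onto a small function over local records. The sketch below is one possible shape for such a function; `get_average_attendance`, the `SAMPLE_EVENTS` list, and the second event in it are hypothetical and exist only to make the example runnable.

```python
from statistics import mean

# Hypothetical event records; the second entry is invented for illustration.
SAMPLE_EVENTS = [
    {"name": "Python & LLMs Workshop", "attendees": 45, "topic": "chatlas and AI"},
    {"name": "Intro to pandas", "attendees": 35, "topic": "data"},
]


def get_average_attendance():
    """
    Get the average attendance across PyLadies Monterrey events.
    """
    if not SAMPLE_EVENTS:
        return 0.0  # avoid an error when there are no events yet
    return mean(e["attendees"] for e in SAMPLE_EVENTS)


average = get_average_attendance()
print(average)
```

A function like this, with a clear docstring and no required arguments, is the kind of thing that can be registered as a tool so the model can call it when the question comes up.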

Connect the chatbot to your data

app.py

```python
from typing import Optional

import chatlas

# Your local event database
EVENTS = [
    {
        "name": "Python & LLMs Workshop",
        "date": "2026-05-09",
        "location": "TEC de Monterrey",
        "attendees": 45,
        "topic": "chatlas and AI"
    },
    # ... more events
]


def get_upcoming_events(topic: Optional[str] = None):
    """
    Get upcoming PyLadies Monterrey events.

    Parameters
    ----------
    topic : Filter by topic (e.g., "AI", "data", "web"). If omitted, return all events.
    """
    if topic is None:
        return EVENTS  # no filter requested
    return [e for e in EVENTS if topic.lower() in e["topic"].lower()]


chat = chatlas.ChatAnthropic(model="claude-sonnet-4-5")
chat.register_tool(get_upcoming_events)

# Now it can answer questions about YOUR events!
chat.chat("What events do we have about AI?")
```

The chatbot uses your function

# 🔧 tool request

get_upcoming_events(topic='AI')

# ✅ tool result

[{'name': 'Python & LLMs Workshop',

'date': '2026-05-09',

'location': 'TEC de Monterrey',

'attendees': 45,

'topic': 'chatlas and AI'}]

¡Hay un evento sobre IA! Es el "Python & LLMs Workshop" que se

llevará a cabo el 9 de mayo de 2026 en el TEC de Monterrey.

Ya hay 45 personas registradas. ¿Te gustaría más detalles?

Building custom chatbots with Shiny

Add the tools to a Shiny chat interface:

```python
from chatlas import ChatAnthropic
from shiny.express import ui

# Create chat UI
chat = ui.Chat(id="chat", messages=[
    {"role": "assistant",
     "content": "¡Hola! Ask me about PyLadies Monterrey events!"}
])
chat.ui()

# Create chatbot with registered tools
# (get_upcoming_events and get_venue_info are tool functions assumed to be defined earlier in app.py)
chat_model = ChatAnthropic(model="claude-sonnet-4-5")
chat_model.register_tool(get_upcoming_events)
chat_model.register_tool(get_venue_info)


@chat.on_user_submit
async def handle_user_input():
    await chat.append_message_stream(chat_model.stream(chat.user_input()))
```

Demo time!

PyLadies Monterrey Event Assistant

Other use cases

- Data analysis: Query databases, generate reports

- Document processing: Extract info from PDFs, summarize research

- API integration: Check weather, send emails, post to Slack

- Workflow automation: Schedule tasks, monitor systems

- Custom agents: Code review bots, customer support, tutoring